USAF/USN 6th Gen Fighters - F/A-XX, F-X, NGAD, PCA, ASFS News & Analysis [2008- 2025]

- Thread starter Matej

- Start date

- Status

- Not open for further replies.

Depends. Will the neural network fly its combat missions with a perfect record before the US hands over the entirety of its nuclear deterrent to it?

What could possibly go wrong with a killer-AI?

FighterJock

ACCESS: Above Top Secret

- Joined

- 29 October 2007

- Messages

- 6,692

- Reaction score

- 7,912

Will the neural network fly its combat missions with a perfect record before the US hands over the entirety of its nuclear deterrent to it?

And that truly scares me, the thought that an AI computer is in charge of the US nuclear deterrent. Nope, I hope that never happens.

- Joined

- 16 April 2008

- Messages

- 10,318

- Reaction score

- 17,229

Nothing to worry about. Just have it play tic-tac-toe until it decides that nuclear war is futile and gives up.

(noughts and crosses to our British cousins)

FighterJock

ACCESS: Above Top Secret

- Joined

- 29 October 2007

- Messages

- 6,692

- Reaction score

- 7,912

Been watching WarGames too many times TomS?

- Joined

- 16 April 2008

- Messages

- 10,318

- Reaction score

- 17,229

How many is too many? Asking for a friend...

Software is your weapon system in any modern aircraft design. The F-35 has what, ten million lines of code? Errors definitely can cause problems that get pilots killed.

I don’t think NGAD is attempting a true UCAV. The loyal wingman seems more likely to be a tethered platform that extends sensor and EW coverage with less risk to the manned aircraft, which is likely also the primary (possibly sole) weapons carrier. I doubt any NGAD UAV will be given an independent ability to fire on targets without direct human instruction, even if that instruction is just a yes/no on a touch screen or throttle. Current DoD policy is that a human must give all fire commands, and presumably, for the drone to be at all useful, it will need a datalink to share information and accept commands like any other modern aircraft. Where the drone probably will be autonomous is in its maneuvering and emission control, which I suspect will be driven by the manned pilot specifying whichever behavior is most useful to the mission (flying far forward, flying close formation, emitting/not emitting, engaging specific targets, etc.). This is the kind of thing already being worked on in much simpler terms with MALD-X/N: being able to modify their behavior on the fly to suit the needs of the launching aircraft.

AI is much more dangerous on the ground where it likely will eventually dominate internal security, economics, and even policy in the near future.

zebra159357

I really should change my personal text

- Joined

- 30 November 2018

- Messages

- 141

- Reaction score

- 481

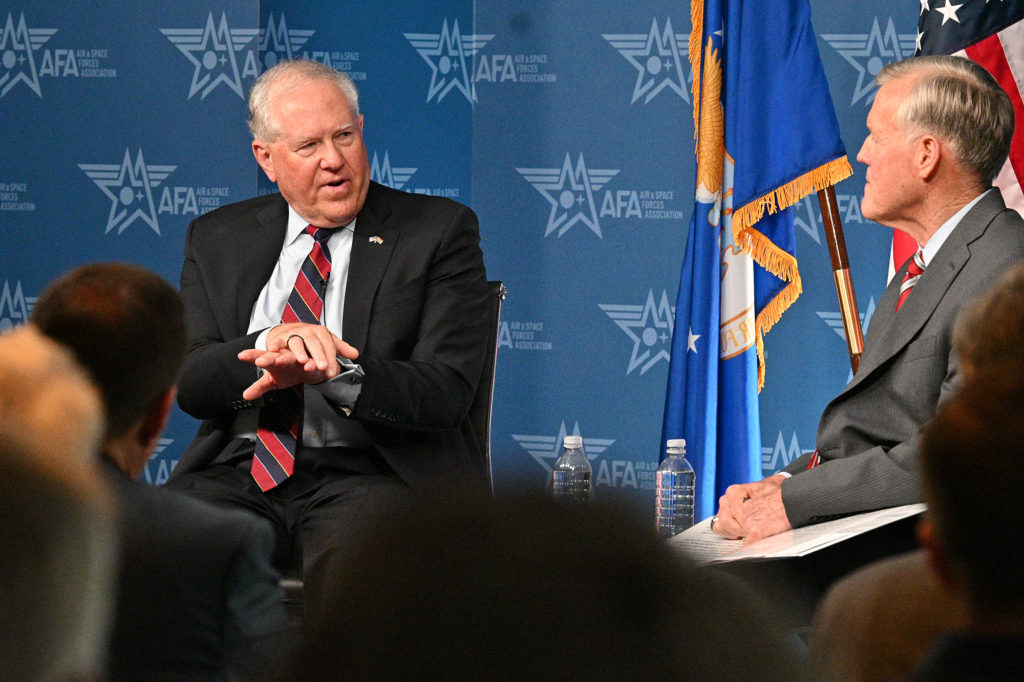

Kendall Dispenses With Roper’s Quick NGAD Rhythm; System is Too Complex | Air & Space Forces Magazine

The crewed Next Generation Air Dominance airplane won’t follow timetables calling for versions to be fielded every five years or so.

- Joined

- 21 April 2009

- Messages

- 14,203

- Reaction score

- 9,057

Materials & Manufacturing – Air Force Research Laboratory

Conspirator

CLEARANCE: L5

- Joined

- 14 January 2021

- Messages

- 325

- Reaction score

- 235

Ok so it took me a while to figure out how to properly explain it.

Think of an FPV drone, but a multi-role military aircraft; that is exactly what I meant when I was saying that. Of course there will be some AI override and controls, but I personally would want as little of that as possible. The pilot would be sitting in a seat with all the controls at hand, with some sort of viewing system, whether it be a screen or a headset. They would have a 360-degree view outside the aircraft, with combat optics including targeting systems integrated into the display as one complete HUD. Of course there would be some issues with this, including latency and jamming/electronic hijacking, but I’m sure there are plenty of countermeasures that could be installed or operated.

Come on, what's the worst that can happen? Some technicians trying to pull the plug once the network becomes self-aware, and the network initiating a nuclear exchange?

Will the neural network fly its combat missions with a perfect record before the US hands over the entirety of its nuclear deterrent to it?

And that truly scares me, the thought that an AI computer is in charge of the US nuclear deterrent. Nope, I hope that never happens.

Nonsense.

I suggest we call it Skynet.

Back on the subject, what I read is that they are again going for bleeding edge, the same ol' business as usual. Isn't there enough tech on the table now for off-the-shelf engineering like in the B-21? An upgraded F-22 or YF-23 seems like it would suffice just fine and be cheaper, so more could be bought.

DrRansom

I really should change my personal text

- Joined

- 15 December 2012

- Messages

- 714

- Reaction score

- 346

In my opinion, all the discussion about technological advances for NGAD is a waste. The next fighter needs to have:

- long range

- large payload

- ability to operate from wider variety of airfields to help in-theater dispersal

- decent (maybe supercruise?) speed to get around

- relatively low purchase and operating costs

Technology matters less than getting something with range and reasonable operating costs as quickly as possible. The idea of a super-fighter is untenable. There are simply too many new systems needed for the military and not enough time to sink another Trillion into a 10 year program.

And, hopefully, you realize that USAF tests are often notoriously biased towards the currently fashionable, big-budget option--always have been. When tests do not produce the intended result, moreover, all the services have a tendency to stop them, change the rules, and try again until they do.

You do realize there has been an actual AI vs human dogfight test by the USAF and that the AI won all five times, right? AIs now can beat every chess master. How they beat every chess master isn’t particularly relevant. In an era of terabyte thumb drives I’m confident every piece of aerial combat history can cheaply reside in any given drone.

The "loyal wing man" concept itself may be just such a politically motivated attempt at institutional self-protection. Politicians and vendors trumpet the potential of remote- and software-controlled drones as cheaper, politically less sensitive replacements for manned aircraft. So the traditional air force flyboys coopt the technology and write a requirement that makes it a mere adjunct to the flesh-and-blood aviator.

That said, my point was not which technology wins, but what the technology in question actually is. At present and for the foreseeable future, "AI" is a marketing pitch, not a reality. Whether a human pilot in an actual aircraft cockpit loses a dogfight to a human pilot flying remotely from a control console or through the software he writes is thus immaterial.

But that probably isn’t even where loyal wingman is going initially. It seems far more likely to me that they will act as stand-off sensor and EW platforms with the much less demanding role of holding formation forward of the manned aircraft and providing target info, cover jamming, and, if necessary, serving as decoys. They might eventually have a short-range A2A capability too, but I suspect their initial role will be more conservative. This is easily within the capability of current tech: an AI with a MADL will be given a behavior directive by the manned platform (recon/decoy/pit bull, etc.) and it will operate within those directives even if the link is cut. This isn’t as challenging as being a stand-alone offensive platform with no human input; it’s basically just a combat Roomba.

The idea that technical change must inevitably mark an advance in capability is fallacious. I have spent most of my working years in the computer industry, almost half of it in storage, plus a couple of years working on an AI-assisted "BigData" project. Advancing technology creates as well as solves problems. Fast, cheap, persistent storage has meant that much more gets stored with much less care about whether it should be, vastly increasing overhead and often reducing access to meaningful data. Similarly, cheap, high-capacity memory and fast processors have allowed much less-efficient programming techniques to prosper. Basic tasks can often take more time than they did 20 years ago.

And that is when everything works as it should: ever more capable hardware spawns ever more complex software. More complex software is harder to test and likely to contain more bugs buried deeper in sub-sub-routines, only to emerge years later (in the middle of my first paid programming gig, the client freaked out because the US east-coast telecom grid went down hard for several days due to a missing semicolon in something like a couple of million lines of code).

Possibly worse still, advancing technology creates its own mythology, of which "AI" is a prime example. Requirements start to get written around what the tech claims to be able to do, rather than around what is needed for a particular purpose. Advertisers spend huge amounts on trackers, databases, and analytics software that track and classify every aspect of our lives, often erroneously. Yet other, smaller companies make higher margins from advertising with no tracking at all other than numbers of visits to web sites. We have seen similar myths before. In the 1930s, the new power-driven turret was to ensure that "the bomber will always get through". In the 1950s, the long-range, beyond-visual-range, radar-guided air-to-air missile was to end the need for guns and dogfights. Etc.

Finally, more capable hardware has also led to less attention to usability and user interfaces. User interfaces have standardized on what is cheapest and most common rather than on what is the best way to manage information transfer. A human pilot may be able to task and control "loyal wing men" in tests and on the range, but, in combat, information overload and distraction are likely to be huge issues. I think it was Robin Olds who described going into combat in a then high-tech F-4 as a matter of turning off the radar warning system, turning off radio channels, and, most importantly, turning off the guy in back's microphone so that he could concentrate. The loyal wingman takes Olds' problem with technology to a whole new level--whether the person in control is a programmer trying to imagine all of the variables of a dynamic combat environment, a guy at a desk in the Arizona desert trying to fly a mission in the Far East, or a pilot in a cockpit.

So technological advance is no guarantee of improved capability or performance. Some things improve. Others don't. Which is which depends on the requirements (knowing what you are trying to do) and on thoughtful implementation (how well you match tools and techniques to tasks). The "loyal wing man" projects appear to choose the tool first and then tailor the requirements to fit the tool--the classic case where everything looks like a nail.

kaiserd

I really should change my personal text

- Joined

- 25 October 2013

- Messages

- 1,656

- Reaction score

- 1,788

There is plenty of truth in the overselling of “AI” and the misleading presentation of greater autonomy as artificial thinking.

However, a lot of the other comments above appear to be little more than technophobia misrepresented as something more reasoned and reasonable.

Not all technological change is good. Sometimes technological change is rushed when it’s not entirely ready. Some (most?) technological changes will prove to have pros and cons that evolve over time (as does the technology).

But a Luddite position that all technological change is inherently and unavoidably bad is unconnected to history or reality.

Anything done poorly will almost certainly perform poorly.

Any UCAV that is implemented with poor conception and implementation around what it is for and what it can actually do is clearly not going to do well.

But you can equally say the same thing about manned aircraft, which are (almost) equally built around and entirely reliant on much the same advanced technology.

And the argument that an unmanned “loyal wingman” is being sold as superior to a manned one is equally a straw-man argument.

It’s not being sold as superior in performance and flexibility versus its manned equivalent (it’s not) - it’s being sold as cheaper and more expendable - to help the manned platform survive and undertake its task rather than seeing more manned platforms shot down and pilots killed. It can be risked closer to threats etc. than airforces will be willing to send their manned aircraft.

It may well be that this initial generation of loyal wingmen may be relatively limited in their capabilities and not live up to their current hype and be bought in relatively small numbers. However as long as they are implemented and used within what they do offer (and they’re not incorrectly prioritised and/ or deployed) then they can help lead to subsequent generations of increasingly capable unmanned combat aircraft. The associated technology is not getting un-invented any time soon.

shin_getter

ACCESS: Top Secret

- Joined

- 1 June 2019

- Messages

- 1,323

- Reaction score

- 1,972

If revolutionary technology fails to work as promised, just dig up the old stuff. That is not nice, but it does not result in decisive defeats.

The failure to adapt to revolutionary technology can result in huge disasters whose outcomes cannot be predicted by those who fail to understand the revolutionary nature of the tech.

It totally has happened; the loyal wing man concept is exactly what entrenched flyboys would pitch given threats from technology.

And, hopefully, you realize that USAF tests are often notoriously biased towards the currently fashionable, big-budget option--always have been. When tests do not produce the intended result, moreover, all the services have a tendency to stop them, change the rules, and try again until they do.

The "loyal wing man" concept itself may be just such a politically motivated attempt at institutional self-protection. Politicians and vendors trumpet the potential of remote- and software-controlled drones as cheaper, politically less sensitive replacements for manned aircraft. So the traditional air force flyboys coopt the technology and write a requirement that makes it a mere adjunct to the flesh-and-blood aviator.

What is warfighting in the AI era? Things like tactics and much of strategy turn into a "software" problem. The proper combat force isn't some "commanders" buying some airplanes from a vendor and telling the airplanes to shoot at some stuff. The proper combat force in the AI environment is a force of programmers, computing clusters, and data collection and modelling people doing dynamic system updates to defeat the opponent's system; the entire combat system is engineered with both electronic and human intelligence parts.

The maneuver space isn't "airplane go north", but "combat force execute combat action" to "enable collection of opponent AI command-control-processing system architecture", in order to find flaws that enable the crafting of "adversarial inputs" that induce errors in the opponent's AI. The strategic adjustment OODA cycle is minimized to hours (the wall-clock time of a Google AI training cycle, as of merely 2022), while patching is an activity that has to happen at the same time as hundred-airplane furballs.

A battle would be a constant state of software updates to plug one's own weaknesses and induce weaknesses in the opponent.

The potential for a hyper-fine-grained, completely centralized campaign also enables absurdly fine and long-time-frame considerations that can be brute-forced into being, with stupid amounts of compute used to solve extensive game theory problems.

For example, I expect the profiling of all human aviators (if skill differential is notable) and systems that enable real time identification via non-cooperative means. There'd be tactical "interactions" to collect this info and other things, and considerations in defeating/neutralizing each "human constraint" would be part of the combat model.

-----------

Of course, the first job of any AI-based combat system is to increase the tempo of combat information processing until it exceeds what human voice communication can deal with, overwhelming and collapsing the legacy system. Force the enemy into automation, then defeat the opponent's automation with superior warfighting capability in this domain.

If there is a tactical warfighter, the job would involve recovering an AI that shows bugged behavior during combat, or predicting weaknesses in AI behavior by observing the tactical situation. That is a skill that needs development, and it is completely alien to military organizations.

The military does not even know how to think about AI-centric warfare, let alone implement it when it becomes feasible. Thankfully that applies to all militaries.

Well before we get to a high level of AI-controlled combat, AI will be (and probably already is) absorbing huge amounts of disparate sensor data, correlating common signals across that data set (a satellite image, a SAR map, and an emission source all located at the same spot, for instance) and providing a prioritized target list. The Army is trying to do this with AI software named Prometheus. The follow-on to that is feeding an AI a list of available platforms and targets so that it can solve the traveling salesman problem of engaging the largest number of prioritized targets in the shortest amount of time. The Army name for this software is apparently SHOT. I’m sure the USAF has an equivalent.
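To make the correlation step concrete, here is a toy sketch in Python. It is not how Prometheus or any fielded system works; the `Report` class, the sensor labels, the grid-cell bucketing, and the ranking rule are all assumptions made up for illustration. It only shows the general idea of grouping reports from different sources that fall on roughly the same spot and ranking locations by how many independent source types agree.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    sensor: str   # hypothetical source label, e.g. "satellite_image"
    x_km: float   # position on an arbitrary flat grid (assumption)
    y_km: float


def correlate(reports, cell_km=1.0):
    """Bucket reports into square grid cells, then rank the cells by how
    many distinct sensor types reported something inside each one."""
    cells = defaultdict(set)
    for r in reports:
        key = (int(r.x_km // cell_km), int(r.y_km // cell_km))
        cells[key].add(r.sensor)
    # Most corroborated cells first; ties broken by cell coordinates.
    ranked = sorted(cells.items(), key=lambda kv: (-len(kv[1]), kv[0]))
    return [(cell, sorted(sensors)) for cell, sensors in ranked]


reports = [
    Report("satellite_image", 10.2, 4.7),
    Report("sar_map", 10.6, 4.1),
    Report("emission", 10.9, 4.9),
    Report("sar_map", 3.3, 8.8),
]
ranked = correlate(reports)
for cell, sensors in ranked:
    print(cell, sensors)
```

Fixed grid cells are the crudest possible choice: two reports a few metres apart can land in different cells if they straddle a boundary. A real system would presumably need proper clustering, error ellipses, and time alignment. The second step described above (matching platforms to targets) is a separate optimization problem and is not attempted here.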

In_A_Dream

ACCESS: Top Secret

- Joined

- 3 June 2019

- Messages

- 763

- Reaction score

- 861

I don't think the military really needs to get surgical with AI-controlled assets. Simply overwhelming an enemy force with expendable drones is enough to do the job. I believe this is the course China is taking regarding carrier air groups and the Pacific bases: just pepper them all with cheap drones/munitions. Maybe they'll even be ICBM-delivered.

But back to the 6th gen discussion, it'll be interesting to see how the central-control manned aircraft turns out. I think the plan for the USAF is to have 3-6 unmanned escort aircraft. Not sure what the Navy is planning; since it's a little different to launch and recover that many aircraft from a carrier, they'll probably have a lower escort count. Probably a more agile fighter. Size will also be an important consideration.

I do think the Navy should have a separate, smaller carrier to launch and recover drones. And for the Aegis cruiser to have command and control capability along with whatever aircraft they're developing.

If you read my remarks at all carefully, I can hardly be called a Luddite or a technophobe. I make my living from computer technology.

What I decry is the cheerleading by those that read marketing slicks--press releases, white papers, and the like, from both vendors and service public affairs offices--without understanding, even at a very high level, what the technology actually is or even can be.

Something called a "loyal wing man" may be bought and fielded. But I very much doubt that it will be what is being sold now, simply because it can't be. So we aren't talking about rejecting technology. We are talking about rejecting smoke and mirrors and the accompanying techno-piety that views all criticism as a sort of heresy.

PR terms like "AI" and "loyal wing man" anthropomorphize machines in ways that trick the unwary into crediting them with powers they do not have (yet), powers that policy makers may come to rely on later. This can have disastrous consequences.

The powered gun turret that I referred to above was '30s high tech. But it wasn't what it was sold to be or believed to be. Nor was it the technology that would let "the bombers always get through"--that was the Mosquito. But the mythical powers of the gun turret were too firmly entrenched in the belief systems of wartime western air forces to be challenged by mere realities. RAF Bomber Command actually commissioned an Operations Research unit to investigate the reasons and potential solutions for its appallingly high losses to German night fighters. While serving with this unit, the famous future physicist Freeman Dyson found a simple, real-world solution using accepted statistics and basic math: strip the turrets out of the bombers. This would have a two-fold effect:

- It would reduce drag and weight and thus increase the bomber's speed just enough to make successful interception by German night fighters statistically impossible

- It would drastically reduce casualties by removing the gunners and thus downsizing the crews by approximately half.
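The two effects in the list multiply rather than add, which a few lines of arithmetic make visible. The sketch below is only an illustration: every number in it (sorties, loss rates, crew sizes) is a made-up placeholder and not historical data, and `expected_crew_losses` is a hypothetical helper, not anything from Dyson's actual analysis.

```python
def expected_crew_losses(sorties, loss_rate, crew_size):
    """Expected aircrew lost = sorties flown x loss rate per sortie x crew per aircraft."""
    return sorties * loss_rate * crew_size


# All numbers below are hypothetical placeholders, not historical figures.
baseline = expected_crew_losses(sorties=1000, loss_rate=0.05, crew_size=8)

# Turrets removed: fewer crew per aircraft AND (assumed) a lower loss rate
# because the lighter, cleaner aircraft is faster.
stripped = expected_crew_losses(sorties=1000, loss_rate=0.04, crew_size=4)

reduction = 1 - stripped / baseline
print(f"baseline={baseline:.0f} stripped={stripped:.0f} reduction={reduction:.0%}")
```

With these placeholder inputs, halving the crew alone would halve expected casualties, and any drop in the loss rate compounds on top of that. How large the real speed gain and loss-rate change would have been is exactly the part that needs data.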

Indeed. Ukraine's Turkish-made Bayraktars seem to be little more sophisticated than cutting-edge hobbyist equipment. They have a "ludicrously" small payload. Yet they have been perhaps the most successful combat drones in history, while operating in the face of the much vaunted air defenses of the West's most sophisticated opponent. Actual, quadcopter hobbyist drones have proved decisive for artillery spotting and scouting for tank hunting teams. Some have even been used as ultralight bombers.

I don't think the military really needs to get surgical with AI controlled assets. Simply overwhelming an enemy force with expendable drones is enough to do the job.

The value of these cheap platforms has derived not from the technology itself, essential though that is, but from the imaginative way in which they have been used to gain leverage on the real-world, here-and-now battlefield. The Ukrainians have skillfully matched the limited capabilities and payloads offered by the technology to the available range of targets, taking into account potential countermeasures.

The Ukrainians understand this technology--what it is, what it can do and what it is not and cannot do.

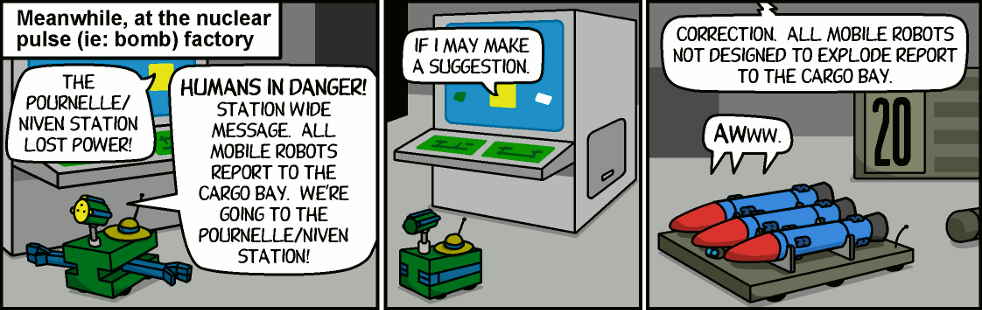

There is a non-silly side to this. If you are on the receiving end of an "AI"-mediated friendly fire incident or find yourself colliding with a "loyal wing man", it might as well be nuclear from your point of view. Skynet presumed a malevolent intelligence. But what if the "AI" in question is not intelligent--only presumed to be--and is thus just a machine that can go on the fritz, like your office thermostat? Do you really want it to have responsibilities?

Come on, what's the worst that can happen? Some technicians trying to pull the plug once the network becomes self-aware, and the network initiating a nuclear exchange?

Will the neural network fly its combat missions with a perfect record before the US hands over the entirety of its nuclear deterrent to it?

And that truly scares me, the thought that an AI computer is in charge of the US nuclear deterrent. Nope, I hope that never happens.

Nonsense.

I suggest we call it Skynet.

One question: what is "the profiling of all human aviators"? How is it done? What attributes, methods, and parameters do you include? How, for example, do you measure "skill" in order to differentiate it? What is "skill" in this context? What units, instruments, and protocols do you use when doing the measuring? Are we counting G-tolerance? Eyesight? Aerobatic ability? Ability to calculate fuel burn? Navigational skill? Tactics? Strategy? Knowledge of rules of engagement/military law/international law? Good judgment? And, if we are, how do we balance them against each other when arriving at a "profile"? Are the units and measurement methods appropriate to each common to all?

The potential for a hyper-fine-grained, completely centralized campaign also enables absurdly fine and long-time-frame considerations that can be brute-forced into being with a stupid amount of compute to solve extensive game theory problems.

For example, I expect the profiling of all human aviators (if skill differential is notable) and systems that enable real time identification via non-cooperative means. There'd be tactical "interactions" to collect this info and other things, and considerations in defeating/neutralizing each "human constraint" would be part of the combat model.

No doubt the above barely scrapes the surface of some very complicated details. But the difference between technology and science fiction, science and magic lies in such details.

This is my core critique of "AI", as practised today. It pretends to be something that it cannot rigorously define. No one has come up with a reasonable definition of "intelligence". And without that, how do you know what you have implemented?

Dreamflyer

'Senior Something'

- Joined

- 13 July 2008

- Messages

- 553

- Reaction score

- 908

No one has come up with a reasonable definition of "intelligence".

In this context, I would describe 'intelligence' as the ability to make decisions based on data that is collected and/or fed in, and the ability to have a positive or negative influence on one's own future and/or the future of other things/beings based on the outcomes of decisions already made and on additional data, without having any self-awareness, emotions, hopes or desires.

So, somewhat like the behavior of my 17-year old cat.

- Joined

- 19 July 2016

- Messages

- 4,672

- Reaction score

- 4,090

Your 17 year old cat is probably a bit of an IQ genius compared to many folk out there. Some of those are in quite powerful and influential positions. Perhaps the next G7 leaders should have a different dietary requirement.....

In other words, the measure of intelligence definitely needs a rethink, which will be difficult given the people who are in a position to decide what intelligence is now.

Russia isn't much of an opponent. Hasn't been for over 3 decades. I would not make decisions about the air power of the USA based on Russia. In under 30 years the USAF will have fielded 3 new fighters and Russia still struggles with one new, idk what to call it... 4.5 gen aircraft.

Indeed. Ukraine's Turkish-made Bayraktars seem to be little more sophisticated than cutting-edge hobbyist equipment. They have a "ludicrously" small payload. Yet they have been perhaps the most successful combat drones in history, while operating in the face of the much vaunted air defenses of the West's most sophisticated opponent. Actual, quadcopter hobbyist drones have proved decisive for artillery spotting and scouting for tank hunting teams. Some have even been used as ultralight bombers.

I don't think the military really needs to get surgical with AI controlled assets. Simply overwhelming an enemy force with expendable drones is enough to do the job.

The value of these cheap platforms has derived not from the technology itself, essential though that is, but from the imaginative way in which they have been used to gain leverage on the real-world, here-and-now battlefield. The Ukrainians have skillfully matched the limited capabilities and payloads offered by the technology to the available range of targets, taking into account potential countermeasures.

The Ukrainians understand this technology--what it is, what it can do and what it is not and cannot do.

Dreamflyer

'Senior Something'

- Joined

- 13 July 2008

- Messages

- 553

- Reaction score

- 908

Your 17 year old cat is probably, a bit of an IQ genius compared to many folk out there.

That´s why her name is Skycat.

apparition13

I really should change my personal text

- Joined

- 27 January 2017

- Messages

- 728

- Reaction score

- 1,342

A Douglas-Grumman hybrid, eh?

Your 17 year old cat is probably, a bit of an IQ genius compared to many folk out there.

That´s why her name is Skycat.

Dreamflyer

'Senior Something'

- Joined

- 13 July 2008

- Messages

- 553

- Reaction score

- 908

A Douglas-Grumman hybrid, eh?

Your 17 year old cat is probably, a bit of an IQ genius compared to many folk out there.

That´s why her name is Skycat.

I also have a dog, you know. You know what he´s called?

- Joined

- 19 July 2016

- Messages

- 4,672

- Reaction score

- 4,090

Something to do with a shovel? I have an orphan Badger that visits, I call her Snarler.........

- Joined

- 6 November 2010

- Messages

- 6,019

- Reaction score

- 7,571

SkyWulf?

A Douglas-Grumman hybrid, eh?

Your 17 year old cat is probably, a bit of an IQ genius compared to many folk out there.

That´s why her name is Skycat.

I also have a dog, you know. You know what he´s called?

Russia isn't much of an opponent. Hasn't been for over 3 decades. I would not make decisions about the air power of the USA based on Russia. In under 30 years the USAF will have fielded 3 new fighters and Russia still struggles with one new, idk what to call it... 4.5 gen aircraft.

Indeed. Ukraine's Turkish-made Bayraktars seem to be little more sophisticated than cutting-edge hobbyist equipment. They have a "ludicrously" small payload. Yet they have been perhaps the most successful combat drones in history, while operating in the face of the much vaunted air defenses of the West's most sophisticated opponent. Actual, quadcopter hobbyist drones have proved decisive for artillery spotting and scouting for tank hunting teams. Some have even been used as ultralight bombers.

I don't think the military really needs to get surgical with AI controlled assets. Simply overwhelming an enemy force with expendable drones is enough to do the job.

The value of these cheap platforms has derived not from the technology itself, essential though that is, but from the imaginative way in which they have been used to gain leverage on the real-world, here-and-now battlefield. The Ukrainians have skillfully matched the limited capabilities and payloads offered by the technology to the available range of targets, taking into account potential countermeasures.

The Ukrainians understand this technology--what it is, what it can do and what it is not and cannot do.

This. By 2027, Norway-Finland-Poland (all on Russia’s border) will have 150 F-35s compared to Russia’s contracted 75 Su-57s, assuming that they are delivered on time. US AirPower should be solely designed with China and the Pacific theater in mind.

shin_getter

ACCESS: Top Secret

- Joined

- 1 June 2019

- Messages

- 1,323

- Reaction score

- 1,972

The answer is: All of them. Every piece of data that can be collected will be thrown into a model and big data systems will be used to extract maximum value out of the information.

One question: what is "the profiling of all human aviators"? How is it done? What attributes, methods, and parameters do you include? How, for example, do you measure "skill" in order to differentiate it? What is "skill" in this context? What units, instruments, and protocols do you use when doing the measuring? Are we counting G-tolerance? Eyesight? Aerobatic ability? Ability to calculate fuel burn? Navigational skill? Tactics? Strategy? Knowledge of rules of engagement/military law/international law? Good judgment? And, if we are, how do we balance them against each other when arriving at a "profile"? Are the units and measurement methods appropriate to each common to all?

For example, I expect the profiling of all human aviators (if skill differential is notable) and systems that enable real time identification via non-cooperative means. There'd be tactical "interactions" to collect this info and other things, and considerations in defeating/neutralizing each "human constraint" would be part of the combat model.

You first start without your own pilots, collect data to build predictive models of tactically relevant factors and observables, ideally parameters you can observe in the enemy while fighting. After observing things like minimum-energy-loss missile evasion, complex maneuvers at high G, or fast response to expected detection of friendly forces, an opponent model gets built up, both over time and instantly updated across the entire tactical fleet. This information can be cross-correlated with things like personnel databases, peacetime data collection and such to potentially enable identification of individual pilots by external observation in real time.

But that would be just a small side project within the task of identifying contacts by means of data fusion. Sensor data is insufficient in a world of VLO aircraft inside swarms of decoys; instead, vehicle behavior and relative positions will be fed into the model to improve predictions. A naive opponent following simple command and control logic would get his decoys, his high-bandwidth remote-controlled drones, and his manned command and control aircraft identified by observation of behavior, and get tactics made to counter them: saturating command nodes, finding ideal locations to insert jamming assets, finding coordination issues between command nodes, exploiting predictable behavior in response to threats, and so on. The Kosovo F-117 shootdown was the simplest of patterns; in the world of bigger data there are far more patterns to pick up.

A smart opponent would find means to deceive you, and fleet-scale tactics grow in complexity as manned aircraft pretend to be drones and drones pretend to be manned. Side-band information like human sleep cycles and best guesses on aircraft maintenance time requirements would be combined with battle damage information, models of opponent logistics capabilities and so on across the entire front to figure out optimal force employment: everything from wearing out the enemy air fleet to set up a maximized attack at a point of low availability, to creating local superiority by exploiting scramble patterns that are predictable given the day of the week, can be worked out.

The details are not something you can know beforehand; you can just do your best to collect and plan, and stumble upon exploitable information once in a while. The details are warfighting itself, and both sides in a competitive fight will be doing everything to improve their understanding and modeling of the opponent while doing their best to deceive the opponent.

Trillions of data points will be collected, thousands of ideas and models explored, and hundreds of software changes are to be expected throughout a campaign. Most of the data, ideas and models would be failures that do nothing useful, but only a few of them need to be effective for significant military advantages, and with modern big data infrastructure, exploring and using all that data and those ideas is cheaper than ever.

The art of war is not something you train before the war and execute by memory. The ability for AI systems to go beyond peak human abilities in tactics in a day of wall clock time with only a few mil of compute means that the art of war can be developed in real time with the particulars of a conflict pinned down right at the point in time.

-------

The air force making AI into lower-bandwidth RC airplanes is a far cry from the all-integrating, hyper-informational model that seeks to outthink the opponent on all levels of conflict across all domains, which would be the end game of AI technology. Robot airplanes are just more reliable actuators for such a model.

You don't need "intelligence", you just need behavior that leads to fulfillment of objectives. Design a scoring function on a model of reality and it reduces to an optimization problem that you can use a world of tools to compute.

This is my core critique of "AI", as practised today. It pretends to be something that it cannot rigorously define. No one has come up with a reasonable definition of "intelligence". And without that, how do you know what you have implemented?
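For readers who want to see what "design a scoring function and optimize it" looks like at its very smallest, here is a toy sketch in Python. Everything in it is an assumption made for illustration: `toy_score`, its peak at (2.0, -1.0), and the simple random hill climb are hypothetical stand-ins and not anything described in this thread. Note that the optimizer can only find whatever the scoring function already encodes.

```python
import random


def hill_climb(score, start, step=0.1, iterations=2000, seed=0):
    """Random local search: keep a candidate only if it scores higher."""
    rng = random.Random(seed)
    best = list(start)
    best_score = score(best)
    for _ in range(iterations):
        # Perturb every coordinate of the current best plan a little.
        candidate = [v + rng.uniform(-step, step) for v in best]
        candidate_score = score(candidate)
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score
    return best, best_score


def toy_score(plan):
    """Hypothetical 'model of reality': the best plan is (2.0, -1.0)."""
    x, y = plan
    return -((x - 2.0) ** 2 + (y + 1.0) ** 2)


plan, value = hill_climb(toy_score, start=[0.0, 0.0])
print("plan found:", [round(v, 2) for v in plan], "score:", round(value, 4))
```

The search lands near the peak that `toy_score` defines. Change the scoring function and the "optimal" behavior changes with it, which is why the definition of the score and of the model carries most of the weight.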

So, we somehow "outthink [an] opponent" without "intelligence"? This is just the kind of loose talk that drives sloppy "AI" "solutions" to non-problems.

The answer is: All of them. Every piece of data that can be collected will be thrown into a model and big data systems will be used to extract maximum value out of the information

You first ... collect data on building predictive models on tactically relevant factors and observables, ideally parameters you can observe in the enemy while fighting. ... opponent model gets built up, ... cross correlated with things like personnel databases, peace time data collection and such ...

But that would just a small side project in the identify contacts by means of data fusion.... <snip>

The details is not something you can know beforehand, you can just ... stumble up exploitable information once a while. ...

Trillions of data points will be collected, thousands of ideas and models explored, hundreds of software changes is to be expected throughout a campaign. ...

-------

The air force making AI as lower bandwidth RC airplanes is a far cry from all integrating hyper-informational model that seeks to outthink opponent on all levels of conflict across...

You don't need "intelligence", you just need behavior that leads to fulfillment of objectives. Design a scoring function on a model of reality and it reduces to a optimization problem that you can use a world of tools to compute.

This is my core critique of "AI", as practised today. It pretends to be something that it cannot rigorously define. No one has come up with a reasonable definition of "intelligence". And without that, how do you know what you have implemented?

"Every piece of data that can be collected will be thrown into a model and big data systems will be used to extract maximum value out of the information"?

Seriously? "Data" is not "information". Collecting all of it without defining what you are looking for creates noise, not knowledge. For example, the number of nose hairs in an average pilot's left nostril and the same pilot's visual acuity are both data. Whether either or both or neither is information is something we cannot determine until we have defined the question that we are trying to answer and done some controlled experimentation. The same goes for all "observables": we've known since Francis Bacon that uncritical, empirical observation is not knowledge and does not answer scientific questions. Empirical evidence is a mixture of noise (lots) and signal (very little). Without well-considered and well-constructed filters, you have nothing.

So you can't just blithely refer to "tactically relevant factors" in passing. Until you have defined what, exactly, is relevant, tactically or otherwise, and tested your definition with controlled experiments, data--observables--are useless. The buzz words "AI", "model", "Big Data", "data fusion" are supposed to dazzle us with visions of super tech. But all they do is obscure the difference between information processing and random data gathering.

You imply that we cannot define requirements with adequate granularity because we cannot know the future, but must instead proceed from vague requirements like "data fusion" in the hopes that more precise requirements will emerge eventually by pure chance: "The details is not something you can know beforehand, you can just ... stumble up exploitable information once a while." But it is because we cannot know the future that we formulate hypotheses, test them under controlled conditions, and then define specific requirements for dealing with the most likely future outcomes. Understanding precisely what happens now is our best guide to what may happen next--and the basis of science.

Perhaps a slightly more detailed example of the real world that lies behind "AI" and "big-data" hype will help. I started in my current profession as a database programmer. Databases work because you start with a specific problem that the database is supposed to solve--the more specific the definition, the higher the likelihood of success. You then define a set of expected relationships between data points and set up tables, keys, indices, etc. accordingly. When ready, you test the results. In my first case, the subscribers got their issues on time, the publisher got its subscription fees, and I got my fee. Success! My model of the client's business worked. From what I have seen subsequently, vastly larger and more complex corporate databases are developed and work in exactly the same way.
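As a rough illustration of "start with a specific problem, then define tables, keys, and indices", here is a minimal sketch using Python's built-in `sqlite3` module. The schema, the table and column names, and the sample rows are all hypothetical and invented for this example; they are not the actual subscription system described above. The specific question being answered is: who should be mailed the issue published on a given date?

```python
import sqlite3


def build_subscription_db():
    """Create an in-memory database designed around one question."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # enforce the declared relationship
    conn.executescript("""
        CREATE TABLE subscriber (
            id      INTEGER PRIMARY KEY,
            name    TEXT NOT NULL,
            address TEXT NOT NULL
        );
        CREATE TABLE subscription (
            id            INTEGER PRIMARY KEY,
            subscriber_id INTEGER NOT NULL REFERENCES subscriber(id),
            paid_through  TEXT NOT NULL  -- ISO date, so text comparison sorts correctly
        );
        CREATE INDEX idx_subscription_paid ON subscription(paid_through);
    """)
    return conn


def mailing_list(conn, issue_date):
    """Return (name, address) for everyone paid up through issue_date."""
    return conn.execute(
        """SELECT s.name, s.address
           FROM subscriber AS s
           JOIN subscription AS sub ON sub.subscriber_id = s.id
           WHERE sub.paid_through >= ?
           ORDER BY s.name""",
        (issue_date,),
    ).fetchall()


conn = build_subscription_db()
conn.execute("INSERT INTO subscriber VALUES (1, 'A. Reader', '1 Main St')")
conn.execute("INSERT INTO subscriber VALUES (2, 'B. Lapsed', '2 Side St')")
conn.execute("INSERT INTO subscription VALUES (1, 1, '2022-12-31')")
conn.execute("INSERT INTO subscription VALUES (2, 2, '2021-06-30')")
print(mailing_list(conn, "2022-05-01"))
```

The foreign key is what makes a subscription row mean something: a row pointing at a subscriber that does not exist is rejected instead of silently becoming noise.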

Ignoring the original design, purpose, and structure of a database undermines its utility and validity. By doing so, you essentially strip it of the controls and logical relationships that make information out of data. Terms like "AI", "big data", "data mining", and "data fusion" obscure this reality to sell systems. Corporate suits can believe that they can gain low-cost, spontaneous "insights" simply by munging all their old databases together. Value for nothing, almost. Credit reporting companies, advertisers, and surveillance-state types in our governments love this approach because it is easy to understand (if you do not think too much) and seems to offer extra return on the investment they made in the original databases. Throw enough miscellaneous data together, stir, and wait for the goodness to emerge from the soup--like magic.

And magical thinking it is. The purpose and design of the collection, storage, and processing system determines the information value of the collected data. Combine them uncritically under the "throw-it-all-in" big-data model and you lose any possibility of drawing reliable conclusions. You take away the order and the result is just noise. Patterns will emerge from this chaos, just as the "big-data" experts claim--humans find patterns in the tile floors of public restrooms--but there will be no reason to think that the patterns are meaningful/useful/actionable and no way to test them. Acting upon revelations found on the men's room floor is not the way to achieve greatness. (Think about this the next time a corporate leader talks about "data-driven decisions".)
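The point that patterns emerge from pure noise can be shown with a small, self-contained Python sketch. It is only an illustration under stated assumptions: the "features" and the "target" are random numbers with no relationship to each other by construction, and the function names are made up for this example. The more unrelated columns you throw in, the stronger the best accidental correlation becomes.

```python
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def best_spurious_correlation(n_features, n_samples, seed=0):
    """Strongest |correlation| between a random target and random features."""
    rng = random.Random(seed)
    target = [rng.gauss(0, 1) for _ in range(n_samples)]
    best = 0.0
    for _ in range(n_features):
        # Each feature is independent noise: any correlation is accidental.
        feature = [rng.gauss(0, 1) for _ in range(n_samples)]
        best = max(best, abs(pearson(feature, target)))
    return best


few = best_spurious_correlation(n_features=5, n_samples=20)
many = best_spurious_correlation(n_features=5000, n_samples=20)
print(f"best of 5 noise columns:    {few:.2f}")
print(f"best of 5000 noise columns: {many:.2f}")
```

With thousands of noise columns and only twenty observations, the best match looks like a respectable finding even though nothing is there. Without a hypothesis defined in advance and a controlled test, there is no way to tell this from a real signal.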

My direct experience with actual "big-data" work is admittedly limited but also revealing. I joined a project that was sold on the basis of "AI". My job was to document the different parts of a large, complex integration for the client. One problem emerged: I could not figure out what the "AI", the "predictive analytics" software installed on client systems, actually did. It did not seem to be connected to anything. It turned out that "AI" was a required part of the bid, but had nothing to do--it was, euphemistically speaking, a "future capability" that was included to keep non-technical suits happy, both in our company and in the client's. The "data-mining" also did not proceed per the marketing team's "big-data" pitch. The client's many databases were not "combined" (munged). Instead, a team of database analysts with a decade plus of experience in the client's line of business systematically copied and dismantled the original databases, rigorously classified the data, and then transferred the contents field-by-field to a new database that was purpose-designed for use with analytic algorithms designed specifically for the customer's business problem. A classic database solution that met the client's real requirements, while ignoring the meaningless "AI" in the original request for proposals.

There are no shortcuts for achieving the kind of performance that you want from your robot airplanes. Achieving it (if it is achievable) will be an enormous and, yes, dangerous undertaking, not because of the rise of Skynet but because of the potential costs and consequences that result from errors in large, complex systems. You cannot count on technical advance to somehow spare you the effort of defining requirements, because that effort is technical advance.

Dreamflyer

'Senior Something'

- Joined

- 13 July 2008

- Messages

- 553

- Reaction score

- 908

SkyWulf?

A Douglas-Grumman hybrid, eh?

Your 17 year old cat is probably, a bit of an IQ genius compared to many folk out there.

That´s why her name is Skycat.

I also have a dog, you know. You know what he´s called?

Tomahawk.

AI as it stands now doesn’t think or necessarily make decisions well. What it can do, with unnerving accuracy, is find patterns in vast data sets in near real time. One of my favorite examples is Amazon software literally predicting pregnancy by shopping patterns, before the person involved necessarily knows.

Random is just a word we use to describe situations where humans can’t find a pattern. AI isn’t truly intelligent or sentient but it does have the capability to detect patterns in vast, complicated datasets and react accordingly or else suggest a course of action.

- Joined

- 3 September 2006

- Messages

- 1,521

- Reaction score

- 1,640

IIRC it was Target, not Amazon, and it was before the teen's father knew. She did know.

AI as it stands now doesn’t think or necessarily make decisions well. What it can do, with unnerving accuracy, is find patterns in vast data sets in near real time. One of my favorite examples is Amazon software literally predicting pregnancy by shopping patterns, before the person involved necessarily knows.

As for the relevance of findings by big data, one shining example is about the root cause of death in humans. Death is found to be correlated with 100% accuracy to ... birth.

100% correlation is hard to argue with!

aonestudio

I really should change my personal text

- Joined

- 11 March 2018

- Messages

- 3,323

- Reaction score

- 8,957

Air Force selects future aircrew helmet

The helmet prototype was chosen after Air Combat Command initiated the search for a next-generation helmet to address issues with long-term neck and back injuries, optimize aircraft technology,

www.af.mil
