You do realize there has been an actual AI vs human dogfight test by the USAF and that the AI won all five times, right?

But that was in a simulator. It wasn't a live package fitted into a real UCAV, actually operating in real 3D space or reliant on a potentially vulnerable datalink. Indeed, DARPA stated that it was possibly 10 years away from being ready to actually 'fly' a fighter in combat.

There were some flaws, such as not observing 500ft separation distances, which meant that in real combat some of those AI drones (having been programmed as 'expendable') would have flown through the debris fields from their own kills and risked damaging or downing themselves in the process. A Loyal Wingman has to be loyal and on your wing; if it destroys itself with the debris of its own kill, then it's not really useful as a reliable wingman.

The software has to run on board the UCAV unless you want to jam up the network, so that adds cost to the drone. If it is shot down, your adversary can potentially access the AI system and find out its weaknesses. That means programming it so that it's not expendable, and therefore the AI must be as concerned about its own preservation as a human would be and desist from Hollywood epic-style stunts. It can probably still perform better in dogfights than a fighter constrained by human physiology, but that caution might blunt the edge.

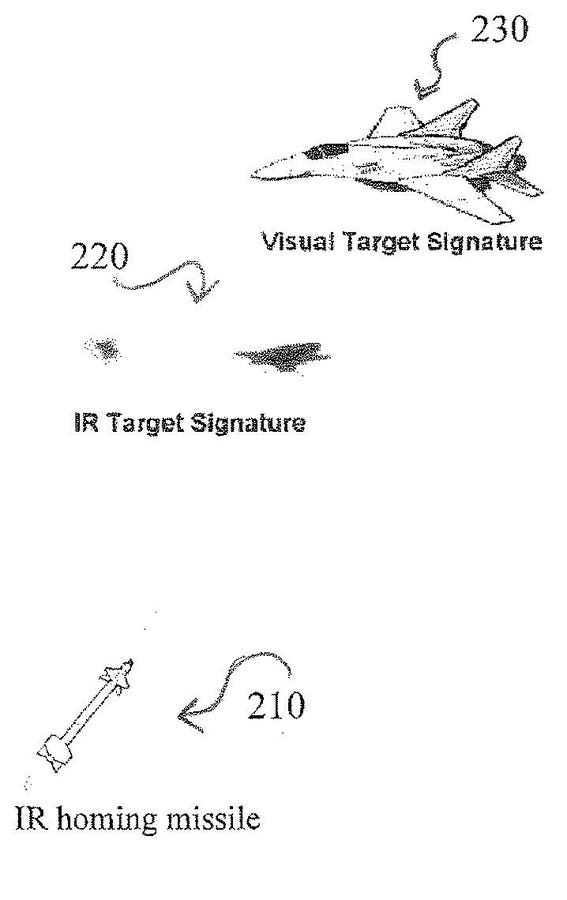

Besides, wouldn't a smart AI think that dogfighting is a waste of effort and go for the long-range sniper kill if it could? One of the AI systems tested went in for the close-in cannon kill option every time, but is that necessarily the best way? Yes, these systems learn, but are they necessarily learning the best methods? Lots of work to be done, I feel, before we can elevate these from high-end gaming software to real fighter pilot brains.